Introduction

This article was originally published on LinkedIn.

I have been working as an Azure solution architect for one of my clients for almost 2 years now. Back then, the ambition was set to re-envision their BizTalk integrations with cloud native components.

Since then, I have designed and tailored an architecture and implemented it with a great team of developers. The architecture supports BizTalk integrations seamlessly and follows specific patterns and practices to get the best out of your integrations, while reducing complexity and improving maintainability.

Outlining the full architecture in detail is beyond the scope of this article, but I will cover some key points before diving into the actual problem.

Base integration architecture

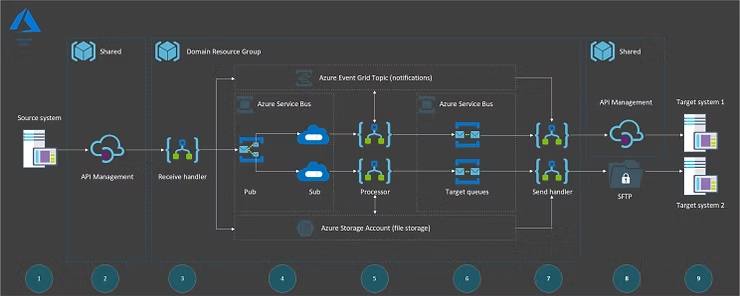

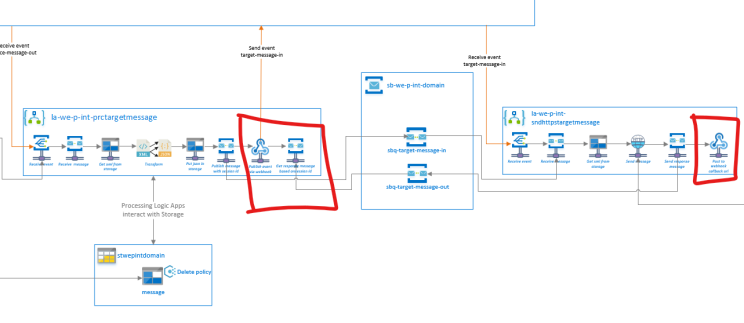

In essence, the base integration architecture looks as follows:

Steps 1 and 9 – Source or target system(s) that send or receive data, push or pull, based on a specific protocol.

Steps 2 and 8 are shared resources, like API Management, to expose APIs to the integration layer.

The integrations are organized in Resource Groups that each represent a specific integration domain. The domain itself is covered by steps 3 to 7 and consists of:

- A Service Bus namespace for the domain. Service Bus is used as a persistence layer between the receive, processor and send channels, and it guarantees delivery throughout the solution. Messages on the bus follow the claim-check pattern: they only contain a reference to the blob in the domain's storage account container, which keeps the messages on the bus small. On the receive side, topics are used for routing to multiple subscribers; decoupling between processor and sender is done with queues (most of the time a one-to-one relation).

- A domain-specific Event Grid topic to support real-time processing and an event-driven architecture. We use a push/push approach instead of polling, because too much polling can be costly.

- A domain Storage account that holds the actual message blob that is exchanged through the chain.

- Receive Logic App(s), responsible for receiving messages, validating them, debatching them into smaller messages in the chain, translating them to a canonical model, etc.

- Processor Logic App(s), for orchestration and message transformation. If processing logic becomes too complex, we leverage Azure Functions to do message transformation or complex processing for us, instead of using BizTalk XSLT, Liquid transforms or complex chains of actions in Logic Apps. The Function result is then used in the Logic App for further processing.

- Send Logic App(s), which only send messages to their target, based on a specific protocol.
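To make the claim-check idea concrete, here is a minimal Python sketch. It is not the Logic App implementation: the in-memory `blob_store` and `bus`, the function names and the `blobName` property are all assumptions made for illustration. It only shows that the message on the bus stays small, whatever the payload size.

```python
import json
import uuid

# Hypothetical in-memory stand-ins for the domain Storage account and Service Bus.
blob_store = {}
bus = []


def send_with_claim_check(payload, container="messages"):
    """Store the payload as a blob and put only a reference on the bus."""
    blob_name = f"{container}/{uuid.uuid4()}"
    blob_store[blob_name] = payload
    # The bus message is the "claim check": a reference, not the payload itself.
    claim = json.dumps({"blobName": blob_name, "size": len(payload)})
    bus.append(claim)
    return claim


def receive_with_claim_check():
    """Take the next reference off the bus and fetch the blob it points to."""
    claim = json.loads(bus.pop(0))
    return blob_store[claim["blobName"]]
```

Sending a 100 KB payload this way puts well under 200 characters on the bus; the receiver redeems the reference to get the full payload back.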

Patterns and practices applied

- Guaranteed delivery

- Single responsibility

- Reliable messaging

- Persistency

- Decoupling

- Claim-Check

With these in place, the service gains consistency, reusability, simplicity, maintainability, security, traceability and performance.

Security

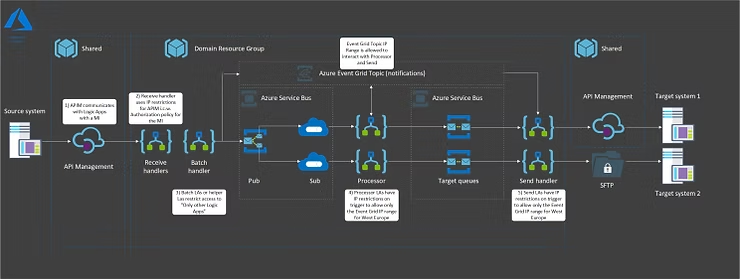

Security is an important topic, and therefore well addressed in the architecture:

- API Management through OAuth and in a VNet

- User Assigned Managed Identity is being used to control RBAC and secure all resources

- Receive Logic Apps are only accessible through the API Management IP and Authorization policies that validate the caller

- Send Logic Apps can only be called through Event Grid IP ranges

- Event Grid, Service bus and Storage are only accessible with User Assigned Managed Identity

A typical implementation of the architecture

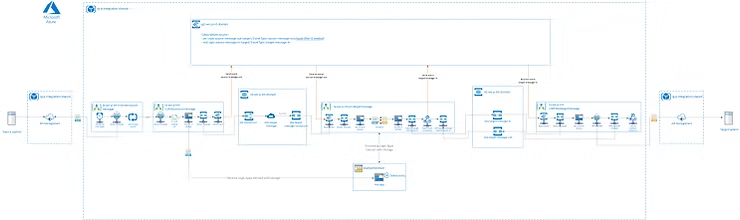

This is what a typical scenario looks like:

The challenge with request / response (with decoupling)

Applying a decoupled architecture introduces a challenge, especially on the processing side when a response from the target system is needed for further processing.

Since we use an event driven architecture, we can only publish an event from the processor, which is picked up by the sender.

The sender in turn will call the target system and get a response. The question that arises is: how do we return the response to the processor, so it can be handled properly?

Service bus sessions with polling

Traditionally, the processor would initiate a message with a session id and send this to the send queue. The session id is important here, because the processor will pick up a message with the same session id from the response queue.

The sender sends a request to a target system, receives a response, and sends this response to the response queue together with the same session id.

The processor keeps polling in a loop, until a response with the same session id is received.

The downside of this approach is that we need to continuously loop and check for a message with the same session id. Especially with integrations that have a high load, this will result in:

- High action/trigger costs for looping while asking the queue for the correct message

- Unnecessarily complex logic needed to keep looping and actively looking for a message

- A shift from an event-driven push/push approach to a push/pull (poll) approach, which is not desired.
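The cost of the polling loop can be illustrated with a small simulation. This is a simplified sketch, not Service Bus code: it assumes one response message arrives per poll, and the function name and the `sessionId` property are hypothetical. Each iteration stands in for one billable action in the processor's loop.

```python
def poll_for_response(arrivals, session_id, max_polls=100):
    """Poll until a message with the matching session id shows up.

    Returns (message, number_of_polls). Simulation assumption: exactly one
    message from `arrivals` lands on the response queue per poll.
    """
    response_queue = []
    pending = list(arrivals)
    for polls in range(1, max_polls + 1):
        if pending:
            response_queue.append(pending.pop(0))  # one message arrives per poll
        for message in response_queue:
            if message["sessionId"] == session_id:
                return message, polls
    # Gave up: every poll was spent without finding the session.
    return None, max_polls
```

If the matching response is the third message to arrive, the processor has already spent three polls before it can continue, and a response that never arrives burns the full `max_polls` budget.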

The downside of Event Grid Topics with Logic Apps

We would expect that Event grid could be leveraged here to pass an event back to the processor, to which the processor listens.

Unfortunately, Logic Apps do not support receiving Event Grid events halfway through a Logic App run. Receiving an event can only be used as a trigger to start a Logic App.

The solution: using a webhook with a callback URL to publish Event Grid events

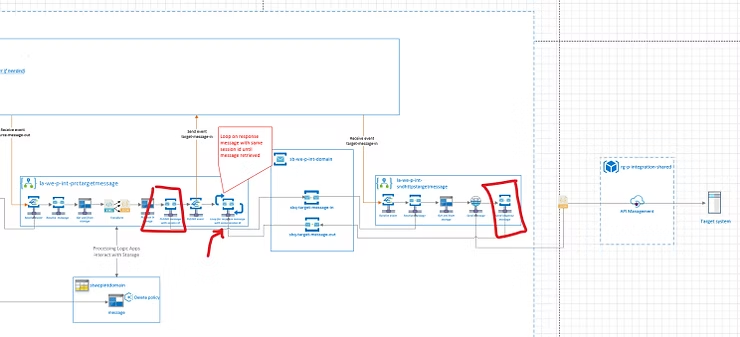

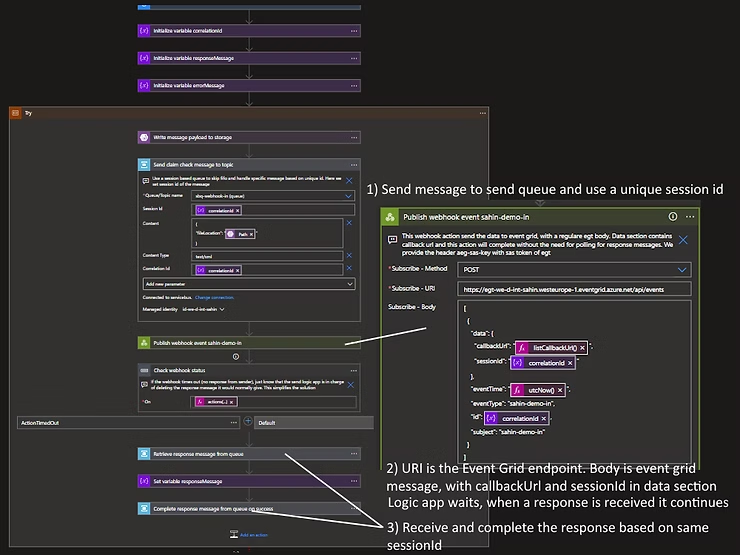

In my search to keep things simple and stick as close as possible to the base architecture, I came up with a solution that makes service bus polling for a response obsolete.

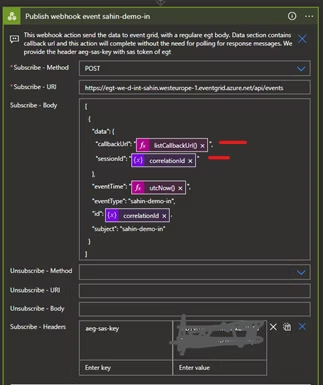

Instead of using the “Publish an event grid event” action in Logic Apps, we can use a Webhook action to publish an event to the Event Grid endpoint. The Webhook action comes with a callback URL that is unique to a specific Logic App run and can be included in the data section of the event.

Together with the session id, it provides the sender with all the information needed to reply to the processor Logic App, without the need for the processor to poll for a response on the Service Bus queue.

The solution will now look as follows:

Additionally, the event data that is sent to Event Grid through the webhook looks like this. As you can see, the webhook simply calls the Event Grid endpoint URL with a regular message that follows the Event Grid schema and contains properties for the session id and callback URL.
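As a rough illustration of such a message, the Python sketch below builds an event with the standard Event Grid schema fields (`id`, `eventType`, `subject`, `eventTime`, `dataVersion`, `data`). The event type, the subject and the property names inside `data` (`sessionId`, `callbackUrl`, `blobName`) are assumptions for this example, not the exact names used in the solution.

```python
import json
import uuid
from datetime import datetime, timezone


def build_request_event(session_id, callback_url, blob_name):
    """Build an Event Grid schema event carrying the session id and callback URL."""
    return {
        "id": str(uuid.uuid4()),
        "eventType": "Integration.Request.Created",  # hypothetical event type
        "subject": f"/requests/{session_id}",         # hypothetical subject
        "eventTime": datetime.now(timezone.utc).isoformat(),
        "dataVersion": "1.0",
        "data": {
            "sessionId": session_id,      # correlates request and response
            "callbackUrl": callback_url,  # unique URL of the waiting run
            "blobName": blob_name,        # claim-check reference to the payload
        },
    }


def to_request_body(event):
    """Serialize the event; Event Grid expects a JSON array of events."""
    return json.dumps([event])
```

In the Logic App, the callback URL would come from the Webhook action itself; here it is simply passed in as a parameter.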

Technical details: fitting the pieces together

If we have a look at the complete solution, it could be something like the following…

The processor implementation

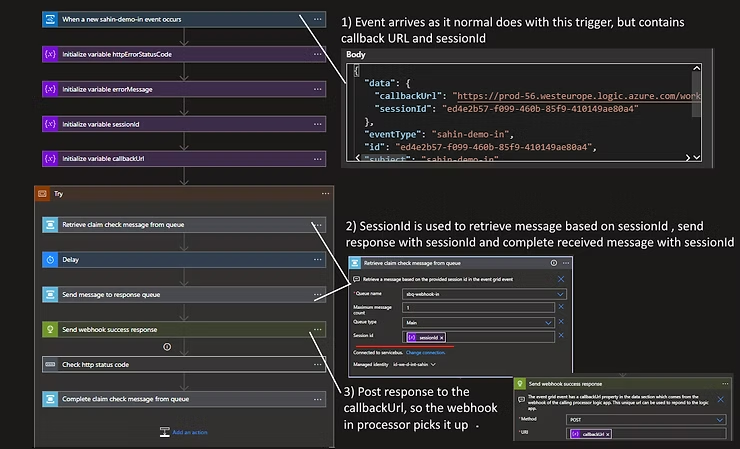

The sender implementation
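As a rough Python analogue of the sender's last step, the sketch below takes the received event and the target system's response and prepares the POST to the callback URL. The function name, the `sessionId` and `callbackUrl` properties and the shape of the reply body are assumptions for illustration; the actual sender is a Logic App.

```python
import json
import urllib.request


def build_callback_request(event, target_response):
    """Prepare the POST that returns the target's response to the waiting run."""
    data = event["data"]
    body = json.dumps({
        "sessionId": data["sessionId"],  # echoed back for correlation
        "response": target_response,     # whatever the target system returned
    })
    return urllib.request.Request(
        url=data["callbackUrl"],
        data=body.encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
```

Passing the returned request to `urllib.request.urlopen` would perform the actual call, which resumes the processor at its Webhook action without any polling.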

Closing

Thanks for reading this post. I’m always curious for feedback, so please let me know what you think of the architecture and the solution for request/response scenarios.

If you’re interested in the nitty-gritty details, don’t hesitate to contact me.

Best regards,

Sahin Ozdemir, Azure Solution Architect at Rubicon Cloud Advisor
